Approximately Correct is not a political blog in any traditional sense. The mission is not to prognosticate elections, like FiveThirtyEight, nor to revel in the political circus, like Politico. And the common variety of political writing seems antithetical to our goals. Today, political arguments tend to follow an anti-scientific pattern of choosing a perspective first and then selectively reaching for supporting evidence. It’s everything we should hope to avoid.

But, per our mission statement, this blog aims to address the intersection of scientific and technical developments with social issues. And social issues (the economy, the environment, healthcare, news curation, etc.) are necessarily political. Moreover, scientific practice requires dispassionate discourse and the ability to change one’s beliefs given new information. In this light, the abstention of scientists from political discourse seems irresponsible.

[An aside: Not all political issues are scientific or technical. The relative value of free speech vs the danger of hate speech may be an intrinsically subjective judgment. But many issues, such as global warming, explicitly exhibit scientific dimensions.]

Technical developments can necessitate policy shifts. Absent the capacity to warm the planet or the ability to detect such warming, one couldn’t justify strong reforms to energy policy. Additionally, absent scientific understanding of the likely effects of policies, one cannot argue effectively for or against them. So sober scientific analysis has a role to play not just in evaluating policies, but also in evaluating individual arguments.

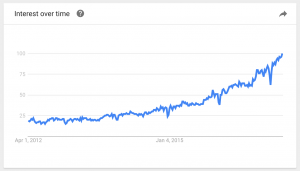

Machine learning and data science interact with politics in a third important way. The political landscapes of entire nations are immense. Take last night’s presidential election, for example. Roughly 120 million people voted in 3,007 counties, 435 congressional districts and 50 states. Hardly any citizens have visited every state. Not even the candidates could possibly visit every county. Thus, our sense of the nation’s pulse and our narratives regarding the driving forces in the election are ultimately shaped by a mixture of second-hand accounts and data science (such as extensive polling).

Simplistic Narratives

Simplistic narratives and data science play off of each other. Narratives influence the questions that pollsters ask. And each poll result invites simplistic analysis. In the remainder of this post, without expressing my personal opinions, I’d like to give a dispassionate analysis of several popular stories that have risen to prominence during this election, sampled from across both the Democratic-Republican and establishment/anti-establishment divides. I choose these narratives not because they are completely true or completely false. Rather, each presents a seemingly simple thesis that belies more complex realities. To be as even-handed as possible, I’ve chosen one each from the Clinton-leaning and Trump-leaning narratives.