[This article is a revised version reposted with permission from KDnuggets]

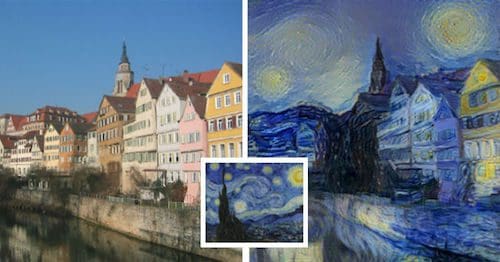

Are deep neural networks creative? Given recent press coverage of art-generating deep learning, it might seem like a reasonable question. In February, Wired wrote of a gallery exhibition featuring works generated by neural networks. The works were created using Google’s Inceptionism, a technique that transforms images by iteratively modifying them to enhance the activation of specific neurons in a deep net. Many of the images appear trippy, with rocks transforming into buildings or leaves into insects. Several other researchers have proposed techniques for generating images from neural networks for their aesthetic or stylistic qualities. One method, introduced by Leon Gatys of the University of Tübingen in Germany, can extract the style from one image (say, a painting by Van Gogh) and apply it to the content of another image (say, a photograph).

In the academic sphere, work on generative image modeling has emerged as a hot research topic. Generative adversarial networks (GANs), introduced by Ian Goodfellow, synthesize novel images by modeling the distribution of seen images. Already some researchers have looked into ways of using GANs to perturb natural images, for example by adding smiles to photos.

In parallel, researchers have also made rapid progress on generative language modeling. Character-level recurrent neural network (RNN) language models now permeate the internet, appearing to hallucinate passages of Shakespeare, Linux source code, and even Donald Trump’s Twitter eruptions. Not surprisingly, a wave of papers and demos soon followed, using LSTMs for generating rap lyrics and poetry.

Clearly, these advances emanate from interesting research and deserve the fascination they inspire.

In this post, rather than address the quality of the work (which is admirable) or explain the methods (which has been done ad nauseam), we’ll instead address the question: can these nets reasonably be called creative? Already, some make the claim. The landing page for deepart.io, a site which commercializes the “Deep Style” work, proclaims “TURN YOUR PHOTOS INTO ART”. If we accept creativity as a prerequisite for art, the claim is made here implicitly.

In an article on Engadget.com, Aaron Souppouris described a character-based RNN, suggesting that higher sampling temperatures make the network more creative. In this view, creativity reduces to entropy maximization. In other words, to be more creative is simply to be more random.
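To make the link between sampling temperature and entropy concrete, here is a minimal sketch in plain Python. It is not taken from the Engadget article or from any particular RNN implementation: the function names and the four logit values are assumptions chosen purely for illustration. It divides the scores by a temperature before applying a softmax, then measures the Shannon entropy of the resulting distribution.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities, scaled by a sampling temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def entropy_bits(probs):
    """Shannon entropy of a discrete distribution, in bits."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


# Hypothetical scores for four candidate next characters (illustrative only).
logits = [4.0, 2.0, 1.0, 0.5]

for t in (0.5, 1.0, 2.0, 100.0):
    probs = softmax_with_temperature(logits, t)
    print(f"T={t:>5}: entropy = {entropy_bits(probs):.3f} bits")
```

As the temperature grows, the distribution flattens toward uniform and its entropy approaches the maximum for four outcomes, two bits. At that limit the model's learned preferences no longer matter, which is the sense in which "more creative" here just means "more random".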

Can we accept a view of creativity which consists primarily of stochasticity? Does it make any sense to accept a definition by which creativity is maximized by a random number generator? If not, is there another sense in which these models could be reasonably described as manifesting creativity?

An opposite view on creativity has long been espoused by deep learning pioneer Juergen Schmidhuber. In his work on computational art, he suggests that low entropy is a defining characteristic of art.

The reduction of creativity to randomness does seem to clash with the notion of creativity we attribute to humans. It seems uncontroversial to label Charlie Parker, Beethoven, Dostoevsky, and Picasso as creative, and yet their work is clearly coherent.

In Current Neural Networks, Who Supplies the Creativity?

The algorithms that we too frequently anthropomorphize with claims of creativity come in both deterministic and stochastic varieties. First, there are deterministic processes like Inceptionism and Deep Style. Then there are stochastic models, like RNN language models and GANs. These approaches model a probability distribution over the data and provide sampling mechanisms for generating new data (images or text).

Note: it’s not hard to imagine trivial modifications to make a deterministic version of a stochastic model. For a language model, we might always output the highest-probability phrase instead of sampling. It’s also not hard to imagine some small modifications that might give rise to stochastic versions of deterministic models. With Inceptionism, for example, we could randomly choose which nodes to enhance.
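The first modification in the note above can be sketched in a few lines. This is a toy illustration, not any real language model: the word distribution and the function names are made up, and the only difference between the two decoders is whether we take the most probable word or draw one at random.

```python
import random

# Hypothetical next-word distribution from a language model (illustrative only).
next_word_probs = {"the": 0.5, "a": 0.3, "one": 0.15, "every": 0.05}


def greedy_choice(probs):
    """Deterministic: always return the most probable word."""
    return max(probs, key=probs.get)


def sampled_choice(probs, rng):
    """Stochastic: draw a word in proportion to its probability."""
    words = list(probs)
    weights = [probs[w] for w in words]
    return rng.choices(words, weights=weights, k=1)[0]


rng = random.Random(0)  # fixed seed only so that a run can be repeated
print([greedy_choice(next_word_probs) for _ in range(5)])
print([sampled_choice(next_word_probs, rng) for _ in range(5)])
```

The greedy version returns the same word every time, while the sampled version varies from draw to draw. Neither ever produces a word outside the distribution it was given.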

In the deterministic case, creativity enters the process in two places. First, the creators of Inceptionism and Deep Style themselves are creative. These papers introduced new and captivating tools that did not previously exist. Secondly, the wielders of these tools make creative decisions about what images to process and what settings of the model’s parameters to use. But of course, just as a camera cannot reasonably be described as creative, these tools have no agency. While the capabilities are impressive, with respect to a creative process, these tools function as next-generation Photoshop filters but do not possess creativity of their own.

Assessing the creative capacity of stochastic models seems more difficult. The capacity of GANs and RNNs to generate images or text on their own, requiring only a random seed, creates a deeper illusion of agency. One could set an LSTM to successively generate poems and then sit back and watch as it complies. But we should question whether this is any more creative than sampling repeatedly from any probability distribution.

Is Imitation Sufficient to Constitute Creativity?

Recent research papers and blog posts, including some by my own pen, have anthropomorphized these models. For example, researchers commonly describe the generative process as an act of hallucination. But I’d argue here that these models, while exciting and promising, are doing something fundamentally different from what we usually mean by creativity. That is, these methods are built on top of models that perform imitation. In humans, I think we might describe a reliable imitator as capable or facile, but seldom as creative.

Creativity suggests invention. The language model learns to sample from the distribution of rap lyrics it has already seen. The GAN attempts to recover the distribution from which images were sampled. In contrast, creation suggests giving rise to something distinct.

To illustrate the difference between creativity and imitation more closely, we consider the airplane. At one point, there were no airplanes on earth. Then some creative humans invented an airplane. Of course, in a world without airplanes, no amount of random sampling from the distribution of existing technologies could produce an airplane. The decision to build an airplane didn’t come out of thin air. And at some point I imagine the Wright brothers might have reimplemented some existing technology in the course of self-teaching the discipline of engineering. But we only describe the work as creative precisely because of how it stands out against the distribution of things that already existed. Of course, the airplane didn’t stand out in a random way. The airplane stands out as a compelling invention because it revolutionized human transport.

Turning back towards artwork, I perceive that we apply the term most superlatively, not to those individuals who produce copycat works, but to those who demonstrate divergent thinking, producing ideas and art that are simultaneously compelling and yet surprising. This work doesn’t reflect the distribution of previous work, but actually shifts it. It may not have been predictable, but it’s not sampled according to an arbitrary pattern of randomness.

Consider Bach, whose development of counterpoint altered musical composition, or Charlie Parker, whose improvisational language, bebop, altered instrumental improvisation across many decades and diverse styles. In contrast, artists who simply rise to the level of convincing imitation could be more accurately described as masterful than as creative. The papers introducing GANs and Deep Style are themselves creative precisely because they opened new areas of research.

Is it Possible to Build a Creative Machine?

It seems necessary to clarify that I hold no deep-seated antagonism to the idea of a creative machine. As far as I understand the brain, it is itself a creative machine. And I know of no scientifically-grounded argument suggesting that creativity is fundamentally a property of carbon that could not be reproduced in silicon.

I should also point out that there is a growing community of people already doing creative work that incorporates AI. The aim of this post is neither to deny the power of AI as a tool nor to deny the creativity of people who use it as such.

But we should hesitate before attributing human-like creativity to neural network models themselves. While they learn, they learn only to imitate. High-quality image generators are fascinating, but it is misleading to suggest that they possess agency. Stochastic models that produce realistic output might possess something akin to mastery. But for AI to exhibit creativity, it must do more than imitate.

Perhaps reinforcement learners, upon discovering novel strategies, do exhibit something closer to creativity. AlphaGo achieved superhuman performance at playing Go, discovering strategies that diverged dramatically from those known to human players. Whether or not this system should be called creative strikes me as an interesting question.

There is, however, an important sense in which humans can be considered creative but current reinforcement learners cannot. While RL agents seek optimal strategies for fixed problems, humans additionally exhibit creativity by inventing problems to solve. This notion of creativity seems especially relevant for the arts, where you might say that the most creative artists create new sets of aesthetic objectives.

Perhaps one day truly creative AI will arrive. And while we should consider the possibility seriously, we should be wary of premature proclamations of success.

Fascinating piece. For me, this raises the question of whether evolution can be considered a “creative” system. There is of course a lot of stochasticity, but also directed selection from stochastic processes of genetic (and phenotypic) variation…

I’m glad I found this. I just wonder whether you have any new ideas about this interesting question.